Here’s a telling mismatch of statistics: back in 2013, a Fordham Law School study found that 95 percent of districts used cloud services for various purposes, including data mining of student performance numbers. Yet fewer than 7 percent of the district-vendor contracts restricted vendors from selling or marketing student information. Fast-forward to 2016, and privacy issues are still alive and well when it comes to the vendor-district relationship.

Perhaps the age-old PSA question “It’s 10 PM—do you know where your children are?” should be adapted for schools as “It’s Tuesday—do you know where your students’ data is?”

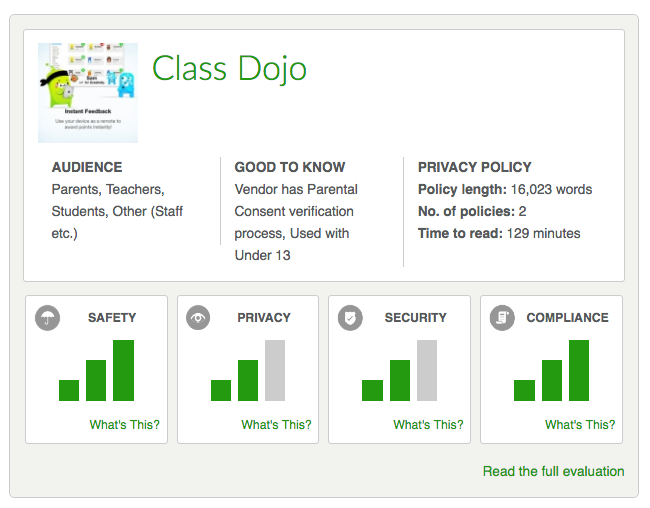

When schools and districts choose edtech software, evaluating products’ privacy policies and practices can be time-consuming or downright confusing. Today, San Francisco-based nonprofit Common Sense Education launched a resource it hopes will give educators in this predicament some insight: the K12 Edtech Privacy Evaluation Platform, which offers privacy evaluations of thirty popular edtech apps, from ClassDojo to Code.org to Actively Learn.

How the Evaluations Take Place

Bill Fitzgerald, Director of Common Sense’s Privacy Review Program, explains that the platform’s privacy evaluations came out of a five-step process, supported by a consortium of 95 school and district partners. “We’ve highlighted the potential for how an application could not be used safely,” he told EdSurge in an interview.

First, Fitzgerald and his team collected the companies’ privacy policies as plain text and annotated them against twelve privacy categories. “We wanted to map paragraphs of terms to one or more of these principles,” he says.

Next, Common Sense ran the terms through a “transparency evaluation,” which assessed how thorough each policy was and identified its strengths and weaknesses. The team asked questions such as “Do the terms contain a list of third-party services used to run the platform?” and “Do the terms include how data will be handled in terms of bankruptcy?”

Finally, the team summarized their evaluations and sorted the strengths and potential risks of each tool into four categories:

1) Privacy: issues such as data mining, profiling, and advertising;

2) Security: encryption, password reset processes, and other areas where issues could arise;

3) Compliance: adherence to laws like COPPA and FERPA;

4) Safety: digital footprint or site interaction issues.

Fitzgerald also reports that the platform is released entirely under a Creative Commons license, so it is free and accessible to anyone.

So, Who’s Got The Best Privacy Practices?

In the reviews, products’ ratings vary from category to category. Even so, Fitzgerald singles out classroom management and messaging platform ClassDojo as one of the best performers overall. “ClassDojo has done a really good job in the way they structured their terms,” he says.

But there’s a caveat related to implementation, he notes: “If a messaging app is being used to convey special education information, for example, potential issues play out in the usage of it. Any potential assessment of risk has to take the user’s role into account.”

Fitzgerald, in fact, feels quite strongly that while some of the responsibility for privacy practices falls on companies, some must also fall on educators. “Let’s say there’s an app that uses email notifications... It should be encrypting emails, but if a teacher knows that an app isn’t encrypting emails, he or she should think twice about what they send.”

And, Fitzgerald adds, there’s one more important element to this privacy conversation: because product usage varies by school and district, what works for one school may not work for another.

“As we’re doing these evaluations, everybody is looking for an absolute answer to the question of ‘Is this safe or not?’” Fitzgerald says. “But that doesn’t exist. It’s going to vary context by context.”