The adage “try before you buy” applies to purchasing clothes and cars, but not to education technology. Administrators sometimes buy software without consulting educators and students. Adventurous teachers may use new tools themselves without informing the tech department. Then there are technical issues: How exactly will a new app be deployed to 30 devices? And who will create and manage student accounts?

The list of woes seems endless. But Clever hopes its new program can help with some of the problems. Since March, the San Francisco-based startup has invited a dozen companies and 18 districts to try “Clever Co-Pilot,” a program that organizes 30-day tests to inform purchasing decisions. Today, the program will be open to anyone interested.

“Districts need an easy way to try software in the classroom,” says Dan Carroll, Clever’s co-founder and Chief Product Officer, who speaks from experience. In his previous role as technology director at a charter school, roughly half of the tools he purchased were “unused or under-utilized.” Back then he wanted to run several dozen pilots, but dealing with the logistics for just four “almost killed me,” he quips.

Since its launch in 2012, Clever has developed APIs that sync student roster data across schools’ student information systems, making it easy for educators and companies to provision user accounts. This technology allows students to log in to different apps with a single credential. Having these pipes in place to simplify the implementation process “opened up new ways to piloting new products,” Carroll tells EdSurge.

With more than 53,000 schools and 220 companies currently using Clever, the Co-Pilot program aims to facilitate not only more pilots but more purchases as well.

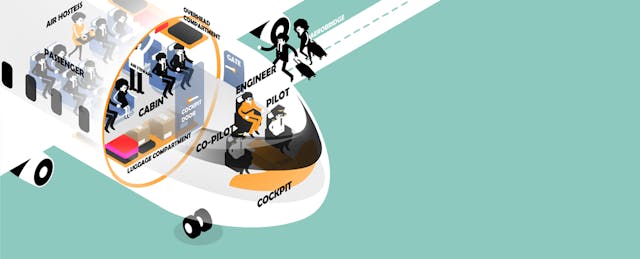

Flight Preparation

Here’s how Co-Pilot works. Administrators share what tools they are looking for, such as a supplemental math tool for middle-school students. Based on this request, Clever suggests options from a list of products. (Both the school and the provider must already be synced with Clever.) The school chooses which ones to try, after which Clever provisions user accounts and provides documentation on how to use the tool.

There are currently 12 tools available through the Co-Pilot program, covering areas such as coding, English language arts, math, science and typing. Expect this number to grow. For now, companies won’t have to pay extra to participate in Co-Pilot. (They will still pay Clever the regular monthly fee to use its API to connect to schools.)

Throughout the 30-day pilot, teachers and students will have access to the full product—not just a basic version or demo account. Afterwards, Clever will provide administrators with a report on how frequently an app was used along with quantitative and qualitative feedback from teachers and students. This data is designed to “help districts make informed decisions,” says Carroll, who adds that “we’re not dictating how schools should purchase.”

For Jin-Soo Huh, Personalized Learning Manager at Alpha Public Schools, a well-run pilot should offer insights on a product’s ease of use and “whether teachers are quickly able to access and act on the data.” He considers 30 days an appropriate length; any shorter, and students “may show increased engagement in a tool just because it’s new.” His charter network will be piloting a typing program through Co-Pilot later this year.

As districts move from pilots to purchase, the program naturally offers a new revenue channel for Clever and its partner companies. “Generating sales leads is the number one request from our partners,” Carroll shares. Clever will take a cut from all sales that happen as a result of the Co-Pilot program. (He kept mum on the exact percentage.)

So far 18 districts have completed or are going through Co-Pilot. Six of them have made a purchase, including Morgan County Schools, which decided to buy MobyMax. “Trying to get teacher feedback and student feedback can be challenging and takes time,” Casey Morgan, the district’s Instructional Technology Coordinator, said in a prepared statement. Working with Co-Pilot “allowed us to lay the groundwork, set up the teachers for the pilot, and leave the data and feedback gathering process to Clever.”

Before ‘What Works’

Interest in pilots is soaring. Many schools, lacking reliable data on product efficacy, turn to these tests for a glimpse of how a tool might work, and whether teachers and students will actually use it. According to a recent Digital Promise study, 62 percent of administrators use pilots to inform technology purchasing decisions.

Regional education groups have also organized variations of “short-term efficacy trials” to help schools figure out what’s worth buying. The Silicon Valley Education Foundation has held “iHub Pitch Games,” where educators tire-kick tools for three months, and has published a report on the latest round. New York City’s iZone invites teachers to try new tools through its “Short-Cycle Evaluation Challenge.” LEAP Innovations conducts year-long pilots in Chicago Public Schools and recently published results on literacy products.

Even the U.S. Department of Education is joining the fray: last year it issued a Request for Proposal for new ways to conduct “rapid-cycle technology evaluations.” The department has picked Mathematica Policy Research to create a set of tools that helps districts evaluate products.

Carroll makes no claim that Clever Co-Pilot will help schools figure out what is effective. In fact, he thinks the industry may be getting a tad too hung up on the efficacy question.

The “bigger problem that people aren’t talking about is around utilization,” he tells EdSurge. After all, it may be premature to judge how well a product “works” if it is used infrequently—or not at all. More usage data can offer better insights into not just whether a tool is effective, but under what circumstances and for whom. With its APIs—and now Co-Pilot—Clever is sitting on a potential goldmine of login and usage data that could make these analyses possible. These insights are valuable not just for educators but also for entrepreneurs refining their tools.

The company has no immediate plans to dive into this data. For the time being, “what Co-Pilot is intended to do is make sure that whatever tools are purchased are already loved and will be used,” says Carroll.