Computational thinking is one of the biggest buzzwords in education; it’s even been called the ‘5th C’ of 21st-century skills. While it got its start as a way to help computer scientists think more logically about data analysis, lately it’s been catching on with instructors in a wide range of subjects, from science to math to social studies.

One reason for its emerging popularity? It’s engaging.

“Ask yourself, would you rather get to play with a data set or would you rather listen to the teacher tell you about the data set?” asks Tom Hammond, an associate professor for the teacher education program at Lehigh University, in Pennsylvania. “Most are more interested in getting hands-on, even if it's just looking at the map and saying, ‘What about this?’”

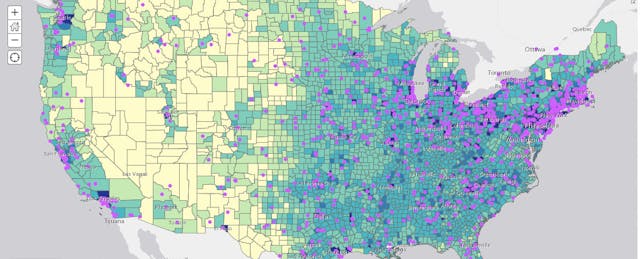

Hammond is a social studies teacher by training with a natural interest in computer science, a subject he’s also taught. Now he’s championing a new approach to his subject that combines computational thinking with data visualization tools, like geographic information systems, or GIS, which pair maps with layers of data—so that users can see, say, election results by county or state borders in the colonial era.

Being able to use data tools is important. But at its core, computational thinking is simply a way to process information using higher-order or critical thinking, says Julie Oltman, who also teaches at Lehigh and is collaborating with Hammond on a related social studies curriculum.

Whether it’s taught in coding class or social studies, the framework is the same: look at the provided information, narrow it down to the most valuable data, find patterns and identify themes. And it works just as well for comparing maps, blocks of code or two works of literature.

“There’s sort of an efficiency there in encouraging this sort of critical thinking across disciplines,” Oltman says. “Same framework, many scenarios.”

Wired for Learning

Document-based questions have long been a staple of social studies classrooms—those exercises that ask students to analyze a given text and assess it critically, looking at things like the author’s motivation and intended audience. As computational thinking requires students to actively work with the data, they end up asking similar questions, or even noticing flaws. The goal, in other words, is to get students to learn not to take everything at face value.

“It kind of gives them direction and cues them into the subtleties,” says Hammond. Those are subtleties students might not notice if the same information were presented to them as fact.

Students also end up doing much of the learning on their own.

Since the human brain is essentially wired to recognize patterns, computational thinking—somewhat paradoxically—doesn’t necessarily require the use of computers at all. Take one of Hammond’s favorite activities, which can be done in a single class period, or be stretched out into a larger project.

First, “I ask students to name five states,” he says. “Say, Virginia, Maryland, Pennsylvania, New York and Massachusetts.” The first four get added to a column on the left side of the whiteboard; Massachusetts gets its own column on the right. “Then another student gives North Carolina, South Carolina, North Dakota, South Dakota and Illinois.” The Carolinas get added to the same column as Virginia and Maryland. But the last three states get sorted into the same bucket as Massachusetts.

After some trial and error, students may discover that what sorts the states on the whiteboard is not, say, geographic proximity or date of entering the union, but rather the etymologies, or place name origins, of the state names. Those derived from European languages get placed on the left; those with Native origins on the right.

“You can do that with paper and what's inside students' heads,” Hammond says, adding that along the way they do learn geography and why certain regions have more Spanish, indigenous or British toponyms. From there, students can have fun with it. They can drill down into counties or expand to Canadian provinces, where they can contrast English and French names.
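For readers who do want to see the same rule-testing on a computer, here is a minimal Python sketch of the whiteboard activity. The column assignments come from the example above; the function names, the two candidate rules and the attribute sets are illustrative assumptions, not part of Hammond's lesson.

```python
# Observed sorting from the whiteboard example: state -> column.
observed = {
    "Virginia": "left", "Maryland": "left", "Pennsylvania": "left",
    "New York": "left", "Massachusetts": "right",
    "North Carolina": "left", "South Carolina": "left",
    "North Dakota": "right", "South Dakota": "right", "Illinois": "right",
}

# Attribute sets used by the candidate rules (assembled for illustration).
ORIGINAL_THIRTEEN = {
    "Virginia", "Maryland", "Pennsylvania", "New York",
    "Massachusetts", "North Carolina", "South Carolina",
}
EUROPEAN_NAME_ORIGIN = {
    "Virginia", "Maryland", "Pennsylvania", "New York",
    "North Carolina", "South Carolina",
}

def rule_original_thirteen(state):
    """Hypothetical student guess: original colonies go on the left."""
    return "left" if state in ORIGINAL_THIRTEEN else "right"

def rule_name_origin(state):
    """Hypothetical student guess: European-derived names go on the left."""
    return "left" if state in EUROPEAN_NAME_ORIGIN else "right"

def counterexamples(rule, sorting):
    """Return the states where a candidate rule disagrees with the board."""
    return sorted(s for s, column in sorting.items() if rule(s) != column)

print(counterexamples(rule_original_thirteen, observed))  # ['Massachusetts']
print(counterexamples(rule_name_origin, observed))        # []
```

A single counterexample is enough to reject the first guess, which mirrors the trial and error students go through before they land on place name origins.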

Another activity takes advantage of GIS software and has students analyze Civil War battle sites by year. Patterns emerge once students notice where early battles were fought, versus ones that took place later in the war, when the Union armies attempted to encircle the Confederacy at Richmond.

The Core of Computational Thinking

In a 2006 paper for the Association for Computing Machinery, computer scientist Jeannette Wing wrote a definition of computational thinking that used terms native to her field, even when she was citing everyday examples. Thus, a student preparing her backpack for the day is “prefetching and caching.” Finding the shortest line at the supermarket is “performance modeling.” And performing a cost-benefit analysis on whether it makes more sense to rent versus buy is running an “online algorithm.” “Computational thinking will have become ingrained in everyone’s lives when words like algorithm and precondition are part of everyone’s vocabulary,” she writes.

But Hammond urges taking a step back—perhaps “backtracking” in Wing’s parlance—to terms that are more readily accessible. “Nobody who isn’t sitting down coding or doing math or physics is going to be like, ‘yes, algorithm construction,’” he says. “So I don’t use that word when I bring it into social studies. But I am saying, ‘I’m making a rule and seeing if it holds up.’”

In that way, “a lot of the more techy bits of computational thinking get subsumed,” Hammond says. What you’re left with are three main steps:

Looking at the data: Deciding what’s worth including in the final data set, and what should be left out. What are the different tools that can help manipulate this data—from GIS tools to pen and paper?

Looking for patterns: Typically, this involves shifting to greater levels of abstraction—or conversely, getting more granular.

Decomposition: What’s a trend versus what’s an outlier to the trend? Where do things merely correlate, and where can you actually infer causation? “Once you’ve got your head wrapped around that pattern, you can generate your rule,” Hammond says, and start exploring whether that rule holds only for a single instance or in a broader context.

“Data patterns and rules are the ways we are packaging computational thinking for social studies,” he adds. “If I was in literature or English language arts, I might come up with a different framing.”

Cause and Effect

Shannon Salter is a social studies teacher at Building 21 High School in Allentown, Pa., who has collaborated with Hammond on projects related to geospatial tools. For the past few years, she’s been working on a curriculum that combines environmental science with city planning, and what she calls “smart design” of urban areas. Naturally, it involves a lot of computational thinking.

Part of the curriculum asked students to analyze neighborhood maps of their city using a geospatial tool called ArcGIS. Students toggled color-coded overlays related to crime rates, vacant houses, tree coverage and proximity to services, such as medical care and schools. Once they had their data, they looked for patterns to make predictions: Is there a relationship between green space and crime levels? What about median income and access to healthcare?

Students very quickly noticed a well-recognized correlation between green space, tree canopy and crime rates. But their prediction was unique. “When we asked students to make a prediction before we looked at the data, they said neighborhoods with higher trees would have higher crime because there would be more places for criminals to hide behind the trees,” Salter says. “We went to test the prediction and they noticed the exact opposite was true.”

That immediately led to a snag. Students had the data, and the tools to analyze it. But part of the decomposition process requires separating correlation from causation. And some early solutions saw students proposing planting more trees in neighborhoods as a way to reduce crime—when in fact, crime rates are high in neighborhoods that lack the resources for a widespread tree canopy.

“We spent quite a bit of time making sure they knew the difference between noting the relationship and assuming causation,” Salter says. “It definitely took some practice to help them understand the difference between just finding a relationship and then a cause-and-effect relationship.”
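The distinction Salter describes can be sketched with a few lines of Python. The numbers below are invented purely for illustration and are not the students' ArcGIS data; the variable names and the `pearson` helper are likewise assumptions. The sketch shows tree canopy and crime moving in opposite directions, while a third factor, median income, tracks both.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient for two equal-length lists."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / sqrt(var_x * var_y)

# INVENTED values for six hypothetical neighborhoods (not real data).
tree_canopy_pct = [5, 10, 15, 25, 30, 40]    # percent of area under canopy
crime_per_1000 = [90, 80, 70, 50, 45, 30]    # reported incidents per 1,000 residents
median_income_k = [28, 32, 38, 50, 55, 70]   # median income, thousands of dollars

r_canopy_crime = pearson(tree_canopy_pct, crime_per_1000)
r_income_canopy = pearson(median_income_k, tree_canopy_pct)
r_income_crime = pearson(median_income_k, crime_per_1000)

# A strongly negative value says canopy and crime move in opposite directions.
print(f"canopy vs. crime:  {r_canopy_crime:.2f}")
# Income is tied to both, so it is a candidate common cause to rule out
# before concluding that planting trees would lower crime.
print(f"income vs. canopy: {r_income_canopy:.2f}")
print(f"income vs. crime:  {r_income_crime:.2f}")
```

A correlation coefficient only describes how two columns move together. Deciding whether trees, income or something else is doing the causal work still takes the kind of discussion Salter's class had.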

Crossing Subject Lines

For Salter, assessment looks a bit different than it might elsewhere. Building 21 uses an innovative, mastery-learning model that is less wedded to unit and testing cycles than the models at other schools. Students are often graded on a custom, cross-disciplinary rubric called a “continuum of skill development,” which scales with students and provides more scaffolding for younger ones.

It’s also flexible and capable of crossing subject lines, meaning Salter can pull from math and English language arts standards for projects and add them to her rubrics.

The goal, Salter says, is to better help students answer the age-old question: How will I use this knowledge in the real world? “Everything they've learned can be applied in multiple contexts,” she says.

Yet Hammond says blending computational thinking into the curriculum need not require such a major overhaul. It can be inserted on a lesson-by-lesson basis and only where it makes sense.

“The social studies curriculum is already an impossible beast—too broad and too shallow, and it’s hard to fit anything in,” Hammond says. “If I was to say roll up your existing curriculum and do this? No way,” he adds. Instead, teachers may be best served by slotting it in as a curricular enhancement.

“I’m not saying throw out your other stuff,” he says. “I’m just saying slide this into your other stuff.”