One of the nice things about adaptive learning platforms is that their strengths and weaknesses are apparent. On one hand, you have a system that instantaneously evaluates responses and tailors exercises to a student's unique strengths and weaknesses. On the other hand, there's little in all that computing power that can identify non-cognitive reasons why a student fails to get the correct answer. For example, if a student appears to suddenly give up, he could be exhausted from a string of taxing problems, bored from a string of repetitive problems, or daydreaming about his after-school plans. A teacher might pick up on these things, but it's unlikely a computer would detect them.

Of course, this is merely how things stand at the moment. The future could be quite different, and that possibility is neatly illustrated by new research led by Tilburg University's Marije van Amelsvoort. The study, which will appear in the May issue of Computers in Human Behavior, examined whether intelligent tutoring systems could determine a student's emotional state by observing their facial expressions.

The researchers began by testing whether it was even possible to glean information about problem difficulty from facial expressions. They asked adult participants to view videos of 2nd and 5th grade students who were attempting to solve easy or difficult arithmetic problems using a game developed by Nintendo. The participants then rated the difficulty of the problems based strictly on the audio, the video, or both. The researchers found that adults did in fact give significantly higher difficulty ratings when observing children working on more difficult problems. In a follow-up experiment, the adults observed only the single second before an answer was given rather than the whole video clip. Their performance declined, but their propensity to give higher difficulty ratings to more difficult problems remained statistically significant.

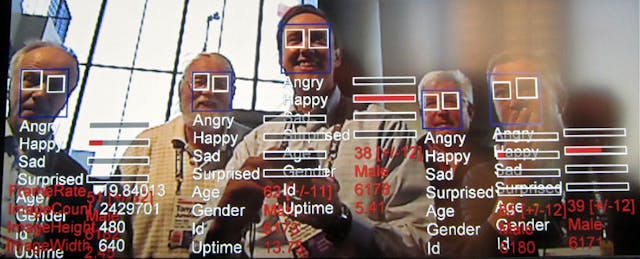

Having established that facial expressions contained information about problem difficulty, the researchers next attempted to find out if a computer program could use facial expressions to accurately identify the level of challenge posed by a math problem. In order to make things as difficult as possible for the program, only the first second of video was analyzed. This prevented the program from obtaining information from pauses, which tend to be very indicative of difficulty.

Using a method called “Active Appearance Models,” a total of 111 video "fragments" were analyzed in Matlab. Instead of analyzing specific facial expressions, which is what the human participants attempted to do, the computer program focused on whether head movements were vertical or diagonal. The program would first choose a single fragment as the "test fragment," and then use the remaining 110 fragments to train itself on how to analyze the test fragment. This was repeated until each of the 111 fragments had taken a turn as the test fragment, a procedure known as leave-one-out cross-validation.
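The train-on-110, test-on-1 procedure described above can be sketched in a few lines of Python. This is only an illustration of the evaluation loop, not the researchers' Matlab code: the two-number feature vectors, the synthetic data generator, and the nearest-centroid classifier are all assumptions made up for the example, standing in for whatever features and model the study actually used.

```python
import random


def nearest_centroid_predict(train_x, train_y, x):
    """Predict the label whose mean feature vector is closest to x.

    A deliberately simple stand-in classifier (an assumption, not the study's model).
    """
    sums, counts = {}, {}
    for features, label in zip(train_x, train_y):
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1

    best_label, best_dist = None, float("inf")
    for label, acc in sums.items():
        centroid = [s / counts[label] for s in acc]
        dist = sum((c - v) ** 2 for c, v in zip(centroid, x))
        if dist < best_dist:
            best_label, best_dist = label, dist
    return best_label


def leave_one_out_accuracy(xs, ys):
    """Hold out each fragment once, train on the rest, and score the prediction."""
    correct = 0
    for i in range(len(xs)):
        train_x = xs[:i] + xs[i + 1:]  # every fragment except the test fragment
        train_y = ys[:i] + ys[i + 1:]
        if nearest_centroid_predict(train_x, train_y, xs[i]) == ys[i]:
            correct += 1
    return correct / len(xs)


def make_synthetic_fragments(n=111, seed=0):
    """Generate hypothetical head-movement features; NOT data from the study."""
    rng = random.Random(seed)
    xs, ys = [], []
    for i in range(n):
        label = "difficult" if i % 2 else "easy"
        shift = 1.0 if label == "difficult" else 0.0
        xs.append([rng.gauss(shift, 1.0), rng.gauss(shift, 1.0)])
        ys.append(label)
    return xs, ys


if __name__ == "__main__":
    xs, ys = make_synthetic_fragments()
    accuracy = leave_one_out_accuracy(xs, ys)
    print(f"{len(xs)} held-out tests, accuracy = {accuracy:.2f}")
```

The appeal of this design with a small bank of videos is that every fragment gets used for testing while the classifier still trains on nearly all of the data each time.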

Across all the video fragments, the program correctly identified whether a problem was easy or difficult 71% of the time. Given the bare-bones nature of what the program had to work with (a single second of video and a bank of only 111 videos), merely saying that the performance was significantly better than chance doesn't do it justice. As the researchers explain, with more work this kind of technology has the potential to accomplish a great deal:

> We are encouraged by our result because, theoretically, it suggests (1) that computational analysis may lead to the discovery of hitherto unnoticed non-verbal behavior patterns, and practically (2) that it is possible to automatically estimate the experienced difficulty from facial expressions, which is of relevance for automatic tutoring systems.

The study is also a nice example of how adaptive learning systems and human teachers are complements rather than competitors. Identifying motivational or emotional issues through facial recognition is most helpful when it leads to a teacher being alerted to the situation and given the responsibility to put the student back on track. Without the skills possessed by teachers, it will be hard for this kind of technology to have a direct effect on student learning.

By breaking problems down into small steps and measuring the time it takes students to work through them, cognitive tutors can already get some idea of how students are coping with an assignment. But the ability to read facial expressions adds a new dimension to the feedback loops used to guide instruction. Ultimately the type of technology used in the study may be able to identify affective states that don’t have standard manifestations in student work, such as boredom, frustration, and fatigue. The researchers are quick to point out that this technology remains largely theoretical, but their work is a sign that adaptive learning systems are improving in areas far beyond their token strengths.