There have been a lot of questions--and anxieties--over what the rollout of the Common Core-aligned tests will mean for schools and students. EdSurge’s Tony and Leonard caught up with Tony Alpert, COO of Smarter Balanced (one of the two consortia currently designing the assessments), to try to get some answers. Here’s what we discussed:

In what ways are the Smarter Balanced tests that you’re developing 'next-generation assessments'? What do you mean by that?

I’d say there are three aspects to this: design, technology, and content.

Design

This is one of the first large-scale efforts at designing assessments, and we will be using a deliberative system to look at the best of past assessments to inform how we build new ones. There are 26 states working with us, and we are trying to build consensus from a design perspective. Over 80 different people are collaborating on this, including the various state departments of education and all the affiliated educators, teachers, and contractors who will write question items, draft achievement level descriptions, brainstorm appropriate scoring methods and pass rates, and decide how to implement this on a technology platform.

Technology

This will be the largest open-source adaptive testing platform that’s ever been administered at this level, and it will provide new capabilities for many states. The platform consists of three components: a summative assessment, interim assessments, and a formative assessment practice. The summative assessment will provide end-of-the-year measures for college and career readiness. The interim assessments can optionally be delivered at different intervals throughout the school year and are non-secure, meaning teachers can review individual questions and student responses [as a feedback mechanism]. The formative assessment practice is more like professional learning for dealing with Common Core and delivering high-quality instruction. Among the various projects in the pipeline, in addition to this platform, are a data warehouse, a digital library, and an item-authoring tool.

Content

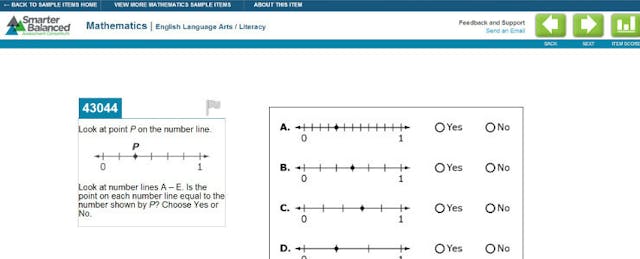

There will be a significant number of opportunities for students to apply their knowledge in a constructed-response way, rather than just filling in facts. Students are going to interact with the content on the screen using tech-enhanced features like drag-and-drop and others that help them demonstrate the ability to analyze and organize. We're able to incorporate constructed response because of the economies of scale when states work together. We will also incorporate AI scoring alongside scoring by educators. We will be conducting pilots in 2013 to see the extent to which we can rely on AI scoring--and see what kinds of questions will require the eyes of a teacher.

How integral to the success of Smarter Balanced is delivering the test on a technology platform?

Our minimum requirements aim to ensure that the total cost of [tech] ownership is as low as possible. We currently have an architecture review board in charge of keeping tabs on the various technology profiles of schools.

We have committed to using tablets, including iPads and those that run on Windows and Android, and are currently focused on implementing 10-inch screens.

We’re also aware of bandwidth issues and are keeping an eye out for technologies that can fully utilize the breadth of HTML5. We continue to meet with leading industry partners like Microsoft, Apple and Google to think about how tech is likely to change in the next couple years, and what we can do to prepare for it. Important questions we discuss are:

- Is there hardware that we currently don’t know about that could help assess kids better?

- Are there item types that need to be assessed and will require hardware that hasn’t been invented yet?

- Will students [and schools] have access to such hardware?

By 2016-2017, we do expect that “natural-based interfaces” (devices supporting stylus and/or touch) will be required, if total cost of ownership is feasible. These interfaces will help with problems that require students to draw figures or charts, or to express their thought process in a way that can’t be put into words.

How has the recent guidance on minimum technology specs for schools been received so far? Who is happy and who is upset? Do you see these minimum specs being too far a reach for any districts or states?

We haven’t heard any complaints; it could be that it’s not yet on the districts’ radar. The specs were developed in collaboration with many states and we’ve looked at the battle scars of schools that have been conducting online testing for a while, so we’re taking all of that into consideration.

What is Smarter Balanced doing to help states identify and close the gaps between what their schools and districts need in terms of technology and what they have? If they require assistance to procure hardware, what advice or resources would you direct them to?

We are not a technology broker. But our premise is that edtech has never been standardized before, and that by offering a common tech standard, schools and districts will be in a better position to procure the appropriate technologies.

Are you concerned, as some educators and observers are, that we will see poor results from these new tests?

I wouldn’t say I’m worried per se that scores are going to be lower. People have to understand that this is going to be a new test, and it’s going to raise the bar. The biggest challenge, I think, is communicating this clearly enough. We’ve released a massive amount of detail on the website to make sure that this is the most transparent design and development of an assessment system ever. We’ve released system architecture, technical framework, minimum specs, weekly newsletters, monthly summaries, and the like. We’ve also presented at events and conferences around the nation.

I believe that there’s a joint model of responsibility between educators and districts and states to try to understand how the assessments will be used. There is an obligation on the part of local districts to disseminate information about our assessment, which is available on our website, to help prepare teachers.

What should we expect for the pilots in 2013?

The pilots will start in late February, and we expect them to involve about 11% of the schools in our partner states. About a million students will take either a math or a reading portion. Our goal is to try out innovative items and see what works. Some of them are not going to work well--and that’s OK. We’re hoping some items fail, because that means we’re pushing the edge of what’s practical and meaningful. These pilots will end around May, after which we will analyze results in preparation for the real test that will come in 2014.